The Case for Precision Learning, not Personalized Learning.

A few weeks ago, I published a piece in The 74 about a topic I’ve been mulling over for years: How can we move beyond the weak and often muddled past attempts at personalized learning toward a targeted, evidence-centered approach to learning akin to what’s been happening for years in the medical field around precision medicine?

A billboard sponsored by the acclaimed Fred Hutchinson Cancer Research Center in Seattle sparked this idea. It says, “We treat your cancer like it’s YOUR cancer.” I couldn’t stop thinking about what it would mean if a school said the same thing. “We treat your learning like it’s YOUR learning.” Not as a tagline, but as an actual operating commitment.

So, my essay argues that generative AI may allow us to finally achieve precision learning, a term I’m using deliberately to distinguish it from personalized learning. I’ve spent years studying personalized learning schools through CRPE, and the research is not flattering. What promised to be a revolution in meeting individual student needs too often became self-paced software, digital playlists, and low expectations wearing the costume of student agency.

Precision learning says: the vision underlying personalized learning was right, but the execution was weak, and here’s a more serious version of it.

What makes places like Fred Hutch and other precision medicine centers genuinely transformative isn’t just the science. It’s the systems: shared clinical protocols, team-based decision making, relentless feedback loops between research and practice. AI-assisted tools are catching aggressive cancers earlier. Genetic mutations that were previously unexplained are now being modeled and forecasted. This is what happens when a field refuses to accept that generic approaches are adequate for serious problems.

AI gives education a real shot at something similar. Not because the technology is magic, but because, for the first time, it makes genuine diagnostic precision feasible at scale. It can surface a student’s specific learning gaps, recommend targeted interventions, and, critically, track whether those interventions are actually working. That last part is where so much ed tech has historically failed. Tools get adopted, but results don’t get measured, and the cycle repeats.

I want to be clear about something I tried to emphasize in the piece: the information alone does nothing. AI embedded in professional workflows, guiding adults toward evidence-based actions, is powerful. But if AI generates reports that nobody reads, then the additional data isn’t helping anyone.

Which gets to the part of the argument that’s most important and most uncomfortable: precision learning isn’t really a technology story. It’s a systems and accountability story. Personalized learning asked teachers to adapt. Precision learning asks leaders to fundamentally change how decisions get made, how professionals are trained, and how schools are organized. In a real precision model, you’d stop asking one teacher to diagnose, instruct, remediate, counsel, and motivate 30 kids. You’d build instructional teams instead, with different adults each specializing in diagnostics, instruction, and mentorship. AI would support those teams without replacing humans or papering over a broken structure.

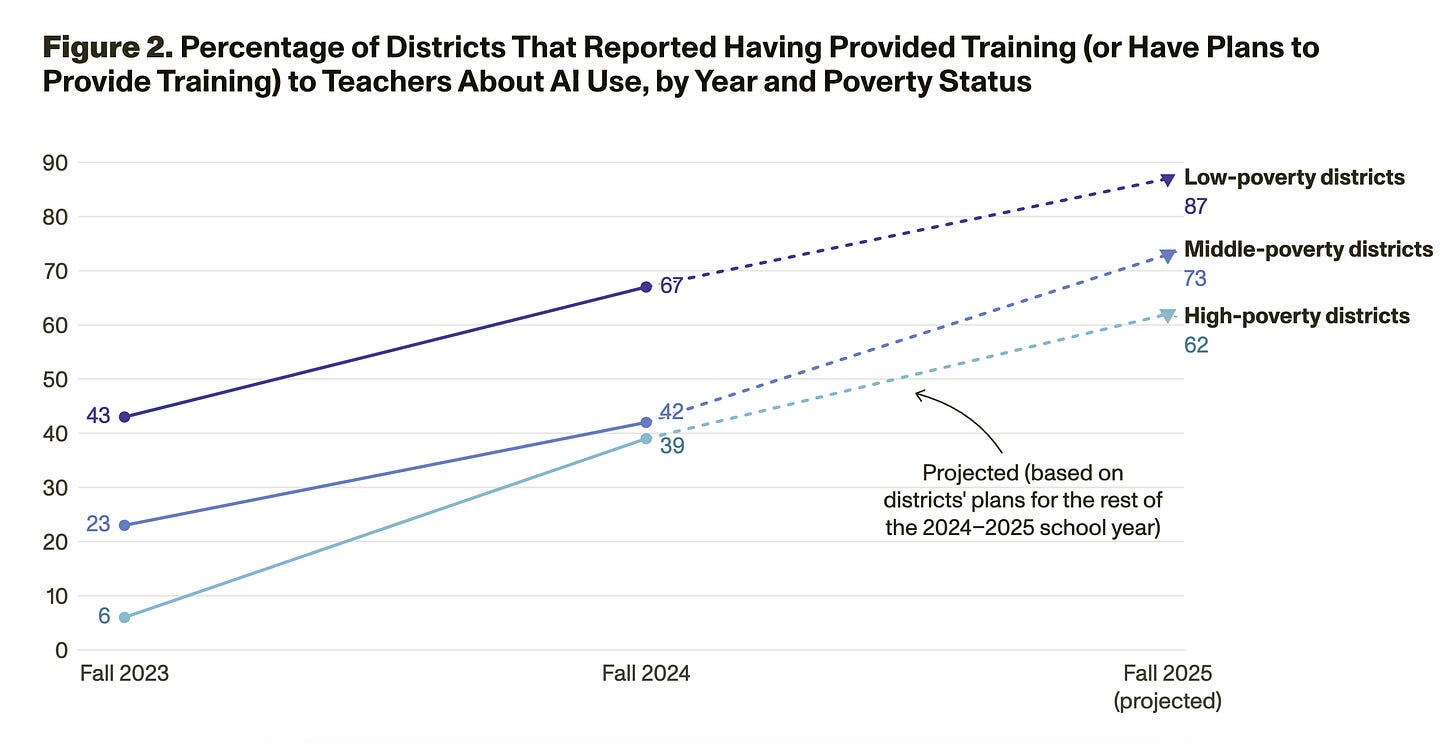

I also wrote about equity. Precision medicine started as an elite treatment before insurance and policy systems made it broadly available. Education cannot afford that same trajectory. The gap in AI access and training between high-poverty and well-resourced districts is already real and already growing. Precision learning that reaches only affluent schools is not progress. It’s just the same old story with better branding.

We won’t achieve precision learning on our current path. AI in education has a weak evidence base. There are few examples of schools and school systems integrating AI into a coherent approach to addressing individual student needs. Policy solutions around AI rarely address more than basic risk factors like privacy and cheating.

The piece calls on states to build precision learning consortia, realign accountability systems, and rethink the role of the teacher in ways that go well beyond typical reform conversations. It calls on ed tech developers to actually embed learning science into their tools rather than just claiming they do. And it floats the idea of a reimagined Institute of Education Sciences leading a national effort to map what I call the “learning genome”: a shared, living knowledge base of what works, for whom, and under what conditions.

These are big asks, I know.

But here’s what I keep coming back to: in medicine, good intentions have to be paired with evidence, standards, and genuine accountability for doctors and researchers to arrive at treatments that work. While we accept this as obvious in healthcare, we have never fully accepted it in education, and students pay the price every single day.

We have the science, and increasingly, we have the technology. But we’re still missing the will and the infrastructure to use them seriously, for every student, not just the ones lucky enough to be born into the right zip code.

The full piece ran in The 74 last week, and below is a podcast with Mike Petrilli on CRPE’s recent work. I’d love to know what you think, especially those of you working inside schools and districts right now or at the policy level. Is this framing useful? Is it missing something important? Add a comment below or write to me.

New AI Tool and Policy Developments

A new tool called Einstein can log in to the Canvas LMS and complete all of the work in a college course, including participating in online discussions, completing homework, and taking tests. The founder, Advait Paliwal, a 22-year-old Brown University dropout, created the tool to make the case for amping up AI literacy for faculty. Paliwal hopes this tool will demonstrate that current teaching models are outdated and require fundamental rethinking.

I agree. We should not be shocked by this technology or this tool. We should instead be busy thinking—both in higher ed and K-12—about how AI can help make education more interesting, challenging, customized, and relevant to students.

From EdWeek: Real-Time Data Shows Exactly How Students Use AI on School Technology

Securly, a company that offers internet and AI safety services, analyzed 1.2 million actual student-AI interactions in 1,300 districts from December 2025 to February 2026. Their analysis showed that students are primarily using AI tools from big tech (e.g., ChatGPT and Google Gemini), even on school technology. AI edtech tools, including MagicSchool, SchoolAI, and Brisk Teaching, made up only 9% of use.

Securly also flags inappropriate student use. Only 2% of interactions were flagged, and the vast majority (95%) of these were students trying to use AI to complete their work. Other violations involved games, sexual content, firearms, gambling, drugs, and hate. Securly’s CEO said that students will test boundaries, but if districts put policies and guardrails in place, then most students will abide by them.

States and AI Education Policy

From CRPE: States and AI: An Early Look at How Early Adopters Are Approaching AI in Education

From my AI research colleagues: CRPE’s new State AI Early Adopter Database compiles publicly available information on AI-related actions across 20 early adopter states in 2024 and 2025, including guidance, legislation, professional learning initiatives, pilot programs, and partnerships. The analysis brief offers an initial, non-evaluative scan of the landscape, documenting how early adopter states are navigating AI amid uncertainty, limited capacity, and a rapidly evolving policy environment. Some key findings:

Flexibility over directives. Most states are issuing non-binding guidance and updating it frequently—signaling priorities while preserving local control.

Professional learning comes first. Nearly all early adopter states are investing in AI literacy and educator capacity-building.

Pilots before scale. About half of the states are testing AI tools through pilots rather than mandating adoption.

Partnerships drive progress. States are leaning heavily on universities, nonprofits, and industry groups to expand expertise and implementation capacity.

Politics is not the main divider. Early AI strategies look similar across red, blue, and divided states.

The state role is still evolving. As AI adoption accelerates, states face growing pressure to clarify expectations, strengthen guardrails, and address infrastructure gaps.

From NASBE: States Take Next Steps on Governing AI Use in Schools

As of the end of 2025, 34 states have statewide AI guidance for schools, with more on the way (e.g., Illinois must develop guidance by July 2026).

Ohio was the first state to require every K-12 district to adopt a formal AI use policy, either the state model or a local policy aligned with it, by July 2026.

In 2026, states will likely shift to “clarifying expectations for districts and aligning AI use with broader goals for teaching, learning, workforce readiness, and student well-being.”

State boards will face growing pressure to ensure that AI “reflects a coherent statewide vision that balances innovation with equity, safety, and local control.”

It is encouraging that there is movement, but if states’ focus is still on providing AI guidance, then they are moving too slowly. Without leadership at the national level, we may end up with a patchwork of different AI guidance policies across states.

There are, however, two new AI guidance frameworks, one from the US Department of Labor (not specific to education), the other from AIR (specific to responsible use of AI in education research).

Research

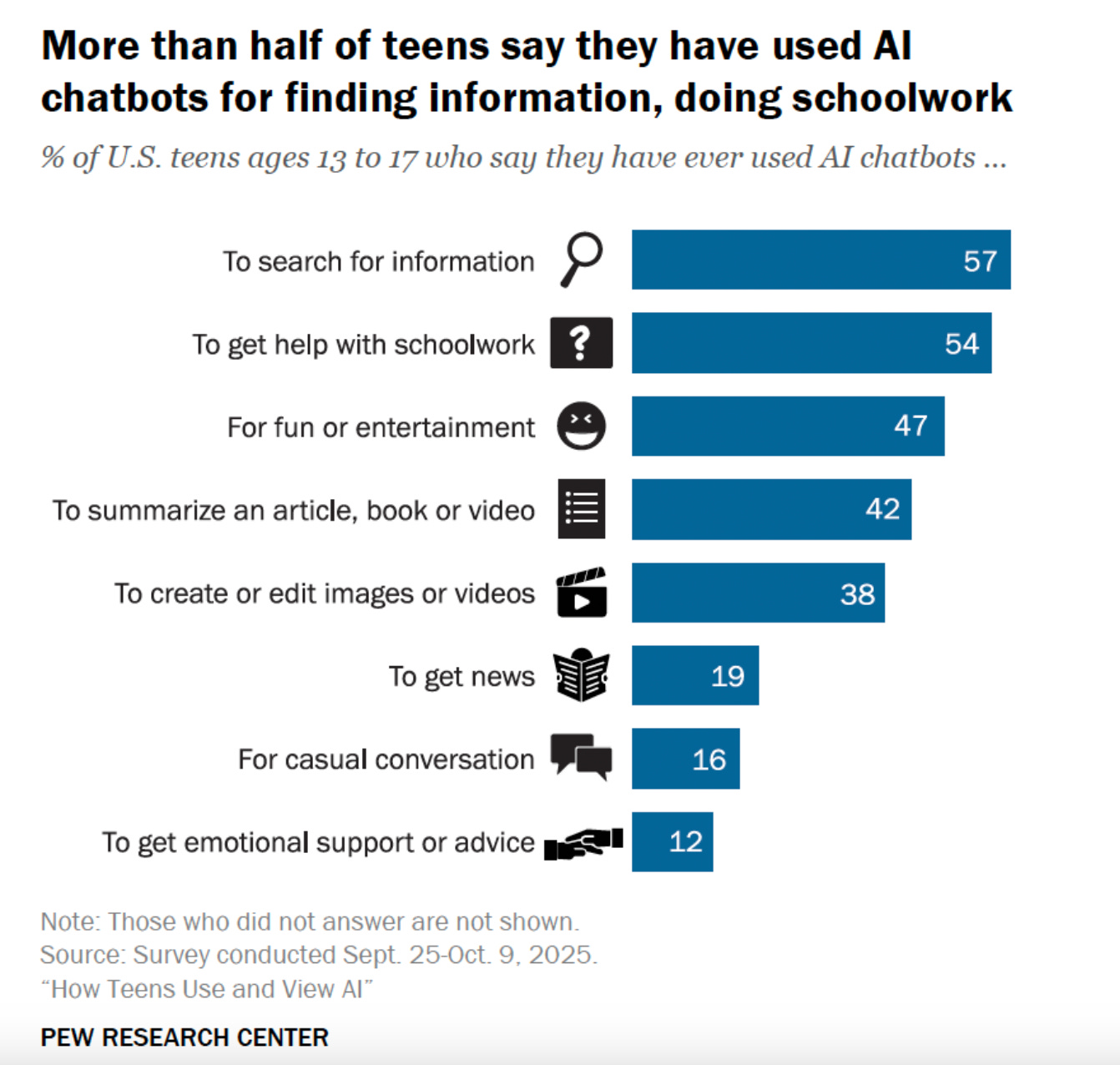

There are two new national surveys of how teens use and feel about AI, one from Pew and the other from the CARE research team at USC. The bottom line:

A majority of teens (just over 50%) say they are using chatbots to find information and help with schoolwork.

A much smaller percentage (12%) say they use AI for emotional support or advice.

Final Words

“The most destructive educational technology we have is the large lecture hall. I would be happy if these technologies forced us to stop putting 400 students in a room…but if we’re really committed to teaching and learning, maybe we’re starting to learn that the transactional model isn’t going to work anymore.”

-Advait Paliwal, Founder, Einstein AI

Thanks to June Han and Dan Silver for tips and contributions. I sometimes use Claude to smooth and edit my writing. However, all errors and em-dashes are mine alone.
